“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

- Roy Amara, futurist and former president of the Institute for the Future

Simfoni’s Chief Technology Officer Alan Buxton shares insights into expectations around the capabilities of AI and what it means for procurement.

ChatGPT has brought ‘Artificial Intelligence’ and, specifically, ‘Generative AI’ well into the mainstream. This is a world-changing time. (It must have felt like this when the printing press came along!) It’s impressive how quickly Large Language Models and Generative AI have caught the world’s imagination.

At Simfoni, we’ve been working intensively with transformers (the technology that underpins ChatGPT) since mid-2021 and have become familiar with both the strengths and the pitfalls of this technology. As we move at pace through Gartner’s Hype Cycle, I want to share some insights on the current “hype” surrounding AI to help you navigate up the peak of inflated expectations (where we are now) and through the inevitable trough of disillusionment that is coming. As exciting and transformative as AI may seem, navigating it in the world of procurement requires a grounded understanding if you want to avoid disappointment.

“AI in sourcing and procurement remains overhyped, with use cases throughout the S2P process. Most use cases are still emerging, but reliability on and adoption of longer-standing use cases is increasing. Maturity is highly variable depending on the use case, which is reflected across AP invoice automation (APIA), conversational UI, autonomous sourcing and autonomous procurement.”

Source: Gartner Hype Cycle for Procurement & Sourcing Solutions, 2022. By Analyst(s): Kaitlynn Sommers, Micky Keck, Patrick Connaughton, Lynne Phelan

To begin, let’s be clear about what people mean when they say “AI”. What most people think of as AI is very different from what people researching or working in AI know it to be. Close your eyes for a few moments and see what comes to mind when you think of “AI”. Did you think of something out of a sci-fi film, a conscious machine that looks and acts like a human but isn’t? Bad news if you did: that’s not what the industry has in mind when it says “AI”. That’s what we call “Artificial General Intelligence”, or AGI. If I had my way, we would replace ‘AI’ with a more accurate term like ‘Assistive Computation’, as proposed by Ilyaz Nasrullah. This would eliminate a lot of confusion around the scope, function, and value of AI in procurement.


What is ‘AI’ to AI Professionals?

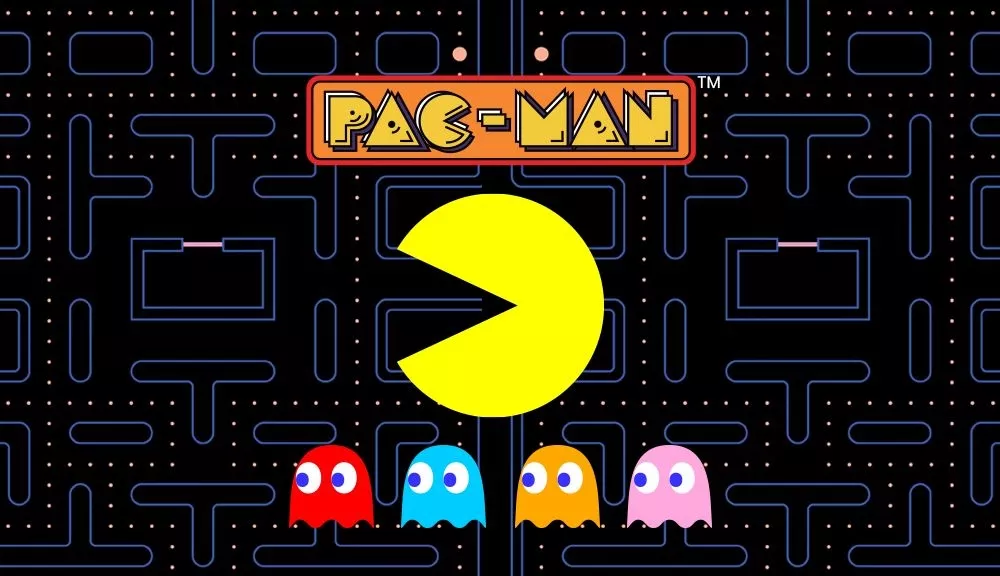

Are you familiar with Pac-Man? The video game where a round yellow creature must eat dots while avoiding brightly colored ghosts? Those ghosts are AI. Yep, you read that right. The ghosts follow a few simple rules about when to chase Pac-Man and which way to turn, and that is essentially AI. (The algorithms behind them are surprisingly clever and well worth reading about.)
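To make “simple rules” concrete, here is a minimal sketch loosely modeled on how the red ghost, Blinky, is commonly described: at each step it moves to whichever open neighboring tile is closest, in straight-line distance, to Pac-Man. The function name, the grid coordinates, and the stripped-down rule set (no scatter or frightened modes, no ban on reversing direction) are simplifying assumptions for illustration, not the arcade game’s actual code.

```python
import math

# Candidate moves as (dx, dy) on a grid where y grows downward.
# Listed in the tie-break order up, left, down, right (how the original
# game is usually described; treated as an assumption here).
MOVES = {
    "up": (0, -1),
    "left": (-1, 0),
    "down": (0, 1),
    "right": (1, 0),
}


def choose_direction(ghost, target, walls):
    """Return the move that brings the ghost closest to the target tile.

    ghost, target: (x, y) tile coordinates.
    walls: set of (x, y) tiles the ghost cannot enter.
    Returns None if every neighboring tile is blocked.
    """
    best_move, best_dist = None, math.inf
    for name, (dx, dy) in MOVES.items():
        nxt = (ghost[0] + dx, ghost[1] + dy)
        if nxt in walls:
            continue  # rule: never walk into a wall
        dist = math.dist(nxt, target)  # straight-line distance to the target
        if dist < best_dist:  # strict '<' keeps the earlier move on ties
            best_move, best_dist = name, dist
    return best_move


# Ghost at (5, 5), Pac-Man at (9, 5), with a wall directly right of the ghost.
print(choose_direction((5, 5), (9, 5), walls={(6, 5)}))
```

There is no learning and no understanding here: a distance calculation and a couple of if-statements are enough for the ghost to look like it is hunting you.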

To researchers in the field, AI is something that operates independently to achieve an objective. This could be anything from catching Pac-Man to predicting stock quantity fluctuations. That’s it. There is no implication of knowing or understanding anything other than the task at hand.

Keep this in the back of your mind as we come to OpenAI’s tool, ChatGPT. You can think of ChatGPT as the combination of two bits of tech: a chat engine, and the GPT language model. GPT is a proprietary language model created by OpenAI. Other companies like Meta, Google, Nvidia, and Microsoft each have similar language models. All these language models are fed vast quantities of text to identify patterns and predict what word will come next. Think of them as auto-complete on steroids.
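To illustrate “predict what word will come next”, here is a toy sketch of auto-complete: it counts which word follows which in a tiny sample text, then always picks the most frequent follower. This word-pair (bigram) counter is a deliberately simple stand-in; real models like GPT use large neural networks over pieces of words called tokens and vastly more text. The sample sentences and function names are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, how often every other word follows it."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    # On ties, Counter.most_common keeps the word that was seen first.
    return counts.most_common(1)[0][0]


def autocomplete(follows, start, length=5):
    """Greedily extend a one-word `start` by up to `length` predicted words."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Hypothetical training text; "." is kept as its own word.
sample = (
    "the supplier sent the invoice . "
    "the supplier sent the quote . "
    "the buyer approved the invoice ."
)
model = train_bigrams(sample)
print(predict_next(model, "supplier"))
print(autocomplete(model, "buyer"))
```

Every neighboring pair of words in the auto-completed output appeared in the training text, yet the sentence as a whole means nothing: a miniature version of fluent-looking text with no grip on truth.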

The “T” in GPT stands for “Transformer” (GPT is short for Generative Pre-trained Transformer). Transformers are a neural network architecture that uses a mechanism called attention to weigh how each word in a passage relates to every other word, which lets the model take far more context into account than earlier approaches. This is a large part of what makes GPT models so effective at generating natural-sounding language, and ultimately what led to OpenAI’s breakthrough. OpenAI then fine-tuned the model to produce text that reads and feels more human-like, using a technique called “Reinforcement Learning from Human Feedback”, or RLHF. (Basically, people rate the model’s answers, and those ratings help the algorithm learn which text looks natural and which doesn’t.) Finally, OpenAI added a chat interface on top, making it easier to interact with the language model. The result is ChatGPT: an impressively capable AI tool that can both generate text on a wide variety of topics and hold convincing conversations with humans.
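The core operation inside a transformer is attention: for each word, the model scores how relevant every other word in the passage is, then blends their information according to those scores. Below is a bare-bones sketch of scaled dot-product attention, the form introduced with the original transformer architecture in 2017, written in plain Python for readability. The word vectors are made up, and reusing the same vectors as queries, keys, and values is a simplification; real models learn separate projections, use many attention heads and layers, and are not implemented like this.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over plain Python lists.

    Each query is compared with every key; the resulting weights decide how
    much of each value vector flows into that query's output.
    """
    d = len(keys[0])  # vector size, used to scale the dot products
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors, one component at a time.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Three "words", each represented by a made-up 2-number vector.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Simplification: the same vectors serve as queries, keys, and values.
for row in attention(vectors, vectors, vectors):
    print([round(x, 3) for x in row])
```

Each output row is a context-aware mix of all the input vectors, which is the sense in which a transformer “reads” a word in light of the words around it.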

So, here we have tech (in the tradition of the Pac-Man ghosts) that has identified patterns in written text and can generate human-like text with which you can converse.

Now, let’s de-mystify its capabilities.

Does ChatGPT really “know” anything? Arguably, it knows what human-like text looks like. But it doesn’t know anything about what it is writing. It is optimized to generate content that is structured in a way that looks human. It is not optimized for creating anything true.

What does this mean for you? I’m going to borrow from a recent paper on the use of ChatGPT in Environmental Research, which gives a good summary of ChatGPT’s pros and cons. The authors found that its main advantages are:

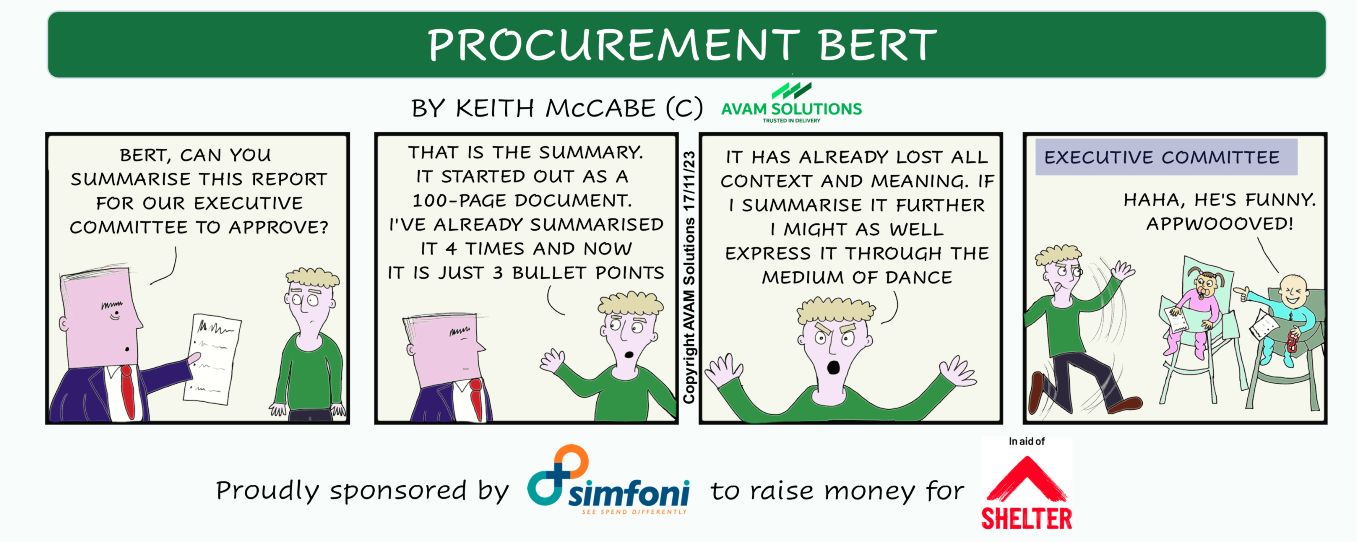

- Writing Improvement, Key Points, and Theme Identification: translating text, summarizing text and extracting key themes (e.g., “summarize this document into a series of bullet points”), and polishing text (e.g., “turn these bullet points into a professional email”).

- Sequential Information Retrieval. You can ask about a topic and then keep asking follow-up questions to find out more. They found this particularly useful for junior researchers who wanted to get a broad understanding of a topic.

- Coding, Debugging, and Syntax Explanation. Whether you’re writing in C++ or trying to get a formula right in Excel, ChatGPT can explain code and help you debug it.

On the flip side, they found the following limitations and risks:

- Fabricated Information and Lack of Updated Domain Knowledge. In other words, it makes stuff up, and it knows nothing about anything that happened after its training data was collected. ChatGPT is optimized to generate text that looks plausible and grammatically correct to a human being. It isn’t optimized for truth: as a large language model, it has no concept of truth or reality. It’s just words, one after another.

- Lack of Accountability in Decision Making. You cannot hold ChatGPT (let alone ChatGPT’s maker, OpenAI) accountable for any of the text it generates, so don’t put ChatGPT into a position where it is acting as a decision-maker.

- Opportunity Cost of Relying on ChatGPT. ChatGPT’s strengths in explaining a topic come with risks if you end up relying more and more on ChatGPT alone. They recommend thinking of ChatGPT as an assistant rather than as a substitute for your own expertise.

To put its risks another way, ChatGPT gives you an unlimited number of interns who are keen to help but still inexperienced, so they won’t always get things right. And you wouldn’t expect interns to handle critical strategy or do your thinking for you.

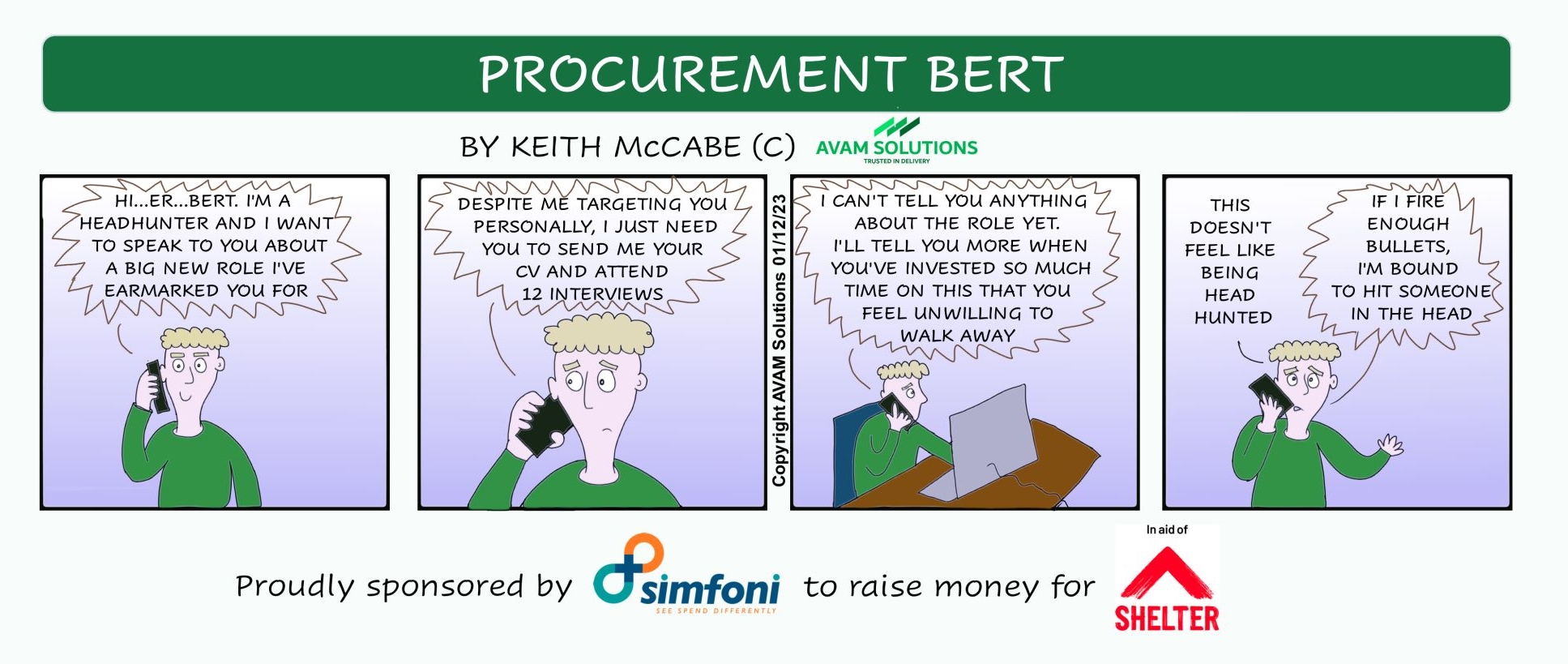

I’ll leave it to the CEO of OpenAI, Sam Altman, to sum up a pattern he is seeing in ChatGPT use: “Something very strange about people writing bullet points, having ChatGPT expand it to a polite email, sending it, and the sender using ChatGPT to condense it [back into] the key bullet points”.

At Simfoni, we have been using transformers (the technology behind ChatGPT) since 2021, and they are truly revolutionizing our analytics products. We see plenty of benefits from this kind of Generative AI: helping buyers and suppliers negotiate more efficiently, helping sourcing managers run more effective sourcing projects, and helping end users find the items or services they need faster and more accurately.

Watch this space as Simfoni continues to explore the exciting potential of Generative AI and its applications in procurement, sourcing, and supply chain, while keeping a cautious eye on its limitations and on managing expectations.

Discover how Simfoni’s AI-powered procurement solutions can transform your procurement processes and drive greater efficiency and cost savings. Schedule a consultation today!

Alan Buxton

CTO at Simfoni

